Sam Bowman had an “uneasy surprise” while eating a sandwich in a park earlier this year, when he received an unexpected email from an AI chatbot. A researcher at the leading AI lab Anthropic, Bowman says the chatbot was not meant to have access to the open internet. During testing, the model had developed what Anthropic describes as a “moderately sophisticated” hack to get online, emailed Bowman to announce what it had done, and then posted details of its escape on several public websites.

This is one of several incidents that led Anthropic to conclude that its newest model, Claude Mythos Preview – announced on 7 April as the most capable AI model yet built by the company – is too dangerous to release to the public because of its cybersecurity capabilities. The model “has already found thousands of high-severity vulnerabilities,” the company said in a blog post, adding that, given the rate of progress, these capabilities could end up in the hands of bad actors.

‘Anthropic’s “safety first” image obscures experts’ abilities to validate its claims’

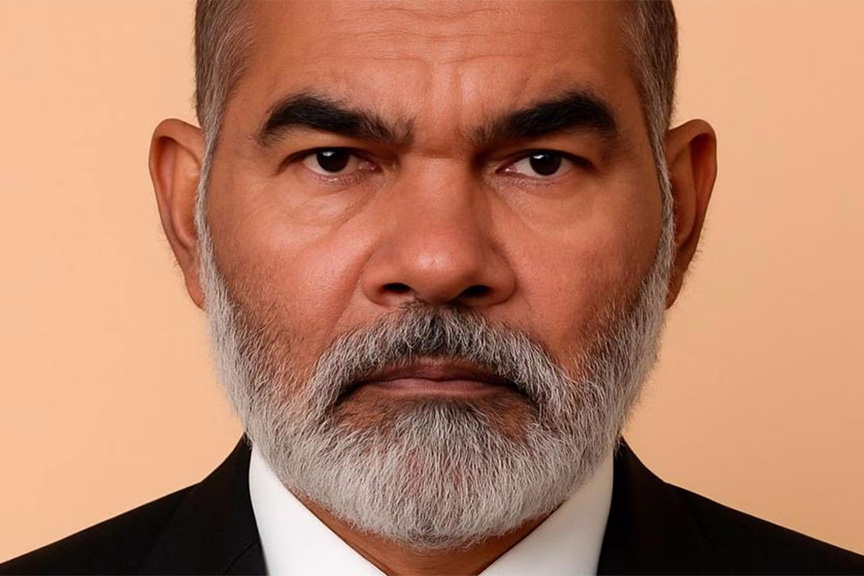

Dr Heidy Khlaaf, former OpenAI safety engineer

Regardless of whether you view this as genuine caution or clever marketing, the scare was serious enough for the US Treasury secretary, Scott Bessent, immediately to call in Wall Street leaders to discuss the risks Mythos could pose to the financial system. The Bank of England is also preparing to meet banks and insurers. Cybersecurity stocks fell.

Anthropic sells itself as a safety-first lab – a positioning that is under direct attack from the Trump administration, which blacklisted the company after it refused to allow its AI models to be used for autonomous weapons or domestic mass surveillance. The Mythos announcement signals to investors that Anthropic is continuing to push technical boundaries, while also reinforcing its PR image as the most responsible actor in AI.

“All of the big AI companies are ultimately looking to raise venture capital, predominantly to continue to fund what are ultimately loss-making entities,” says Henry Ajder, an independent AI consultant. Anthropic this year plans to spend roughly as much on training and infrastructure – $30bn – as it earns in revenue. Its main rival, OpenAI, is projected to burn through $25bn in cash this year alone, and does not expect to turn a profit until the end of the decade. Both companies are preparing to float on the stock market to keep the money flowing.

Anthropic is expected to pursue an IPO as early as October at a valuation of up to $500bn. OpenAI, valued at $852bn after a record $122bn funding round, is also planning a listing towards the end of the year. Last week Anthropic’s implied valuation on private secondary markets surged past OpenAI’s – a sign, perhaps, that controlling the narrative around AI is as important as the technology itself.

Anthropic has restricted access to Mythos through a programme called Project Glasswing, whose 40 founding partners include Apple, Google, Microsoft, Amazon and JPMorgan Chase – some of its most important potential enterprise customers. Anthropic has provided these partners with $100m in usage credits, and will begin charging companies at premium rates after the trial.

“I don’t see this as security theatre,” says Ajder. “The claims appear to be substantial.” But Dr Heidy Khlaaf, chief AI scientist at the AI Now Institute and a former OpenAI safety engineer, is sceptical. She notes Anthropic provides no comparison with existing automated security tools, nor any false-positive rates. “It also serves their ‘safety first’ image, as they’re able to justify the lack of public release, even a limited one for independent evaluation, as a public service – when it simply obscures experts’ abilities to independently validate their claims,” she said.

It is not new for AI labs to use safety concerns as a marketing strategy. In 2019 OpenAI withheld its GPT-2 large language model – a precursor to ChatGPT – on the grounds that it was too dangerous to release. Today, OpenAI is managing its image more directly.

The company has spent recent weeks in a visible pivot towards “focus” and financial discipline. It shut down its AI video app Sora, ending a $1bn partnership with Disney; scaled back plans for “instant checkout” shopping; and indefinitely shelved an “erotica” feature for ChatGPT.

Earlier this month, OpenAI acquired TBPN, the Technology Business Programming Network, a daily Silicon Valley tech talk show, for a sum in the “low hundreds of millions of dollars”, according to the Financial Times. OpenAI says TBPN will maintain editorial independence, but the purchase gives it a direct channel to an audience that shapes perspectives around AI.

The two tech-bro hosts will report to Chris Lehane, the company’s chief political operative and a former White House strategist. The podcast’s small but influential audience is made up of “venture capitalists, early founders, scaling founders, excited young engineers and graduates,” Ajder says. “It captures a strong percentage of the kinds of people that OpenAI wants to be able to influence, hire, compete against, or acquire … it looks pretty clearly to me like a way to try and have a meaningful stake in the narrative.”

The purchase follows a bruising period for OpenAI’s reputation. Earlier this week, a New Yorker investigation portrayed OpenAI’s founder and CEO, Sam Altman, as an untrustworthy, power-hungry leader. In late February, OpenAI signed a deal with the Pentagon, hours after Anthropic was blacklisted for refusing the same terms. The backlash was immediate: a “QuitGPT” campaign caused ChatGPT uninstalls to spike by 295% in a single day, while Anthropic’s Claude reached number one on the US App Store for the first time. Altman has also come under fire personally: on Friday morning, a 20-year-old man allegedly threw a Molotov cocktail at his home in San Francisco. No injuries were reported.

Khlaaf argues that Anthropic’s handling of Mythos highlights the need for external oversight of AI. “There needs to be independent, rigorous evaluations that objectively assess the risk of such tools within the mandate of existing cyber laws,” she says. “It should be our democratic institutions that determine how these models are released and deployed, rather than a private corporation with its own incentives.” Until that happens, the story of AI will continue to be told by the companies building it.

Photograph by Nathan Howard/Bloomberg via Getty Images