Illustrations by Chris Riddell for The Observer

In early December 2022, the then editor of The Observer asked some of us to say what we thought 2023 would be like. My reply was: “1993.” That was the spring when Marc Andreessen launched Mosaic, the first widely used graphical web browser, and suddenly the world understood what this “internet” – which had been up and running, apparently unnoticed, since January 1983 – was for! The appearance of ChatGPT in late November 2022 promised a similar shift: suddenly the world realised that this is what “AI” is!

ChatGPT was the first instantiation of AI that most people encountered, and it’s still what most of us regard as “AI”, much as we once learned to refer to internet search as “googling”. It’s a chatbot, a large language model (LLM) equipped with a conversational interface. And it came as a shock to its early users. As Terrence Sejnowski, an AI pioneer, put it: “A threshold was reached, as if a space alien suddenly appeared that could communicate with us in an eerily human way.” Some aspects of LLMs’ behaviour, he went on, appear to be intelligent, “but if it’s not human intelligence, what is the nature of their intelligence?”

In trying to answer this question, people have inevitably fastened on to metaphors as a way to tether the abstractions of artificial intelligence to more tangible things. I’ve lost count of the number of metaphors for AI that have appeared since 2022, but I guess they now run into the hundreds. Here are 10 of the most interesting.

1. AI is... a cultural technology. This metaphor, first articulated by Alison Gopnik, a leading expert on how children learn, reframes AI not as an artificial mind but as a tool for accessing knowledge in the tradition of writing, printing and libraries – ie something that extends and transforms human cognition without possessing it. It’s the most realistic framing of the technology.

2. AI is… a stochastic parrot. This was an early metaphor devised by a group of distinguished AI critics. It portrayed LLMs as mere pattern-matching machines that shuffle and recombine language without understanding it. It’s rhetorically derisive and understates (or misrepresents) the surprising capabilities of such pattern-matching machines. On the other hand, it also usefully undermines the tendency to anthropomorphise LLMs.

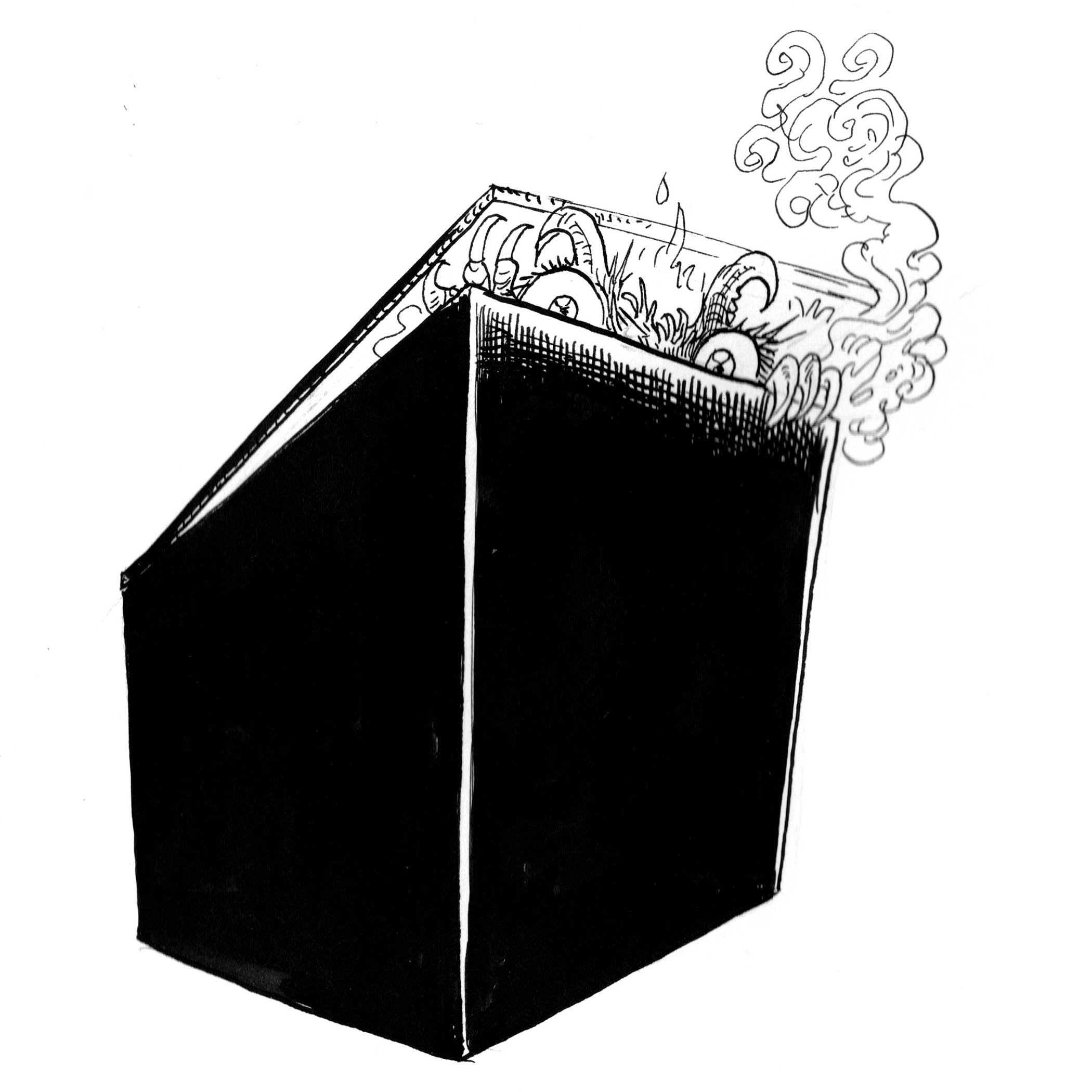

3. AI is... a black box. This metaphor, which originated in electrical engineering and cybernetics, refers to a system where you can see what goes in and what comes out but not what happens inside. Applied to AI by the American legal scholar Frank Pasquale, it emphasises the opacity and inscrutability of AI systems, representing them as unknowable and beyond control. It also encourages passivity and despair rather than stimulating demands for accountability from the corporations that build and operate AIs. And that opacity may itself mean that using these machines to make decisions that affect humans violates the GDPR and possibly the EU AI Act.

4. AI is… a hallucinator. This is arguably the most problematic metaphor, generally employed when LLMs produce confidently articulated sentences that are demonstrably incorrect or fantastical. A more neutral way of describing this phenomenon would be that it’s a “statistical artefact” caused by the model producing plausible but unfounded token sequences. But this seems to be beyond the cognitive bandwidth of mainstream media, which prefer more dramatic narratives about “rogue” AIs. The term “hallucination” medicalises and individualises the failure mode, making it seem like an unfortunate neurological event rather than a systemic property of LLMs’ architecture. And it encourages anthropomorphism, because hallucination is something that humans do.

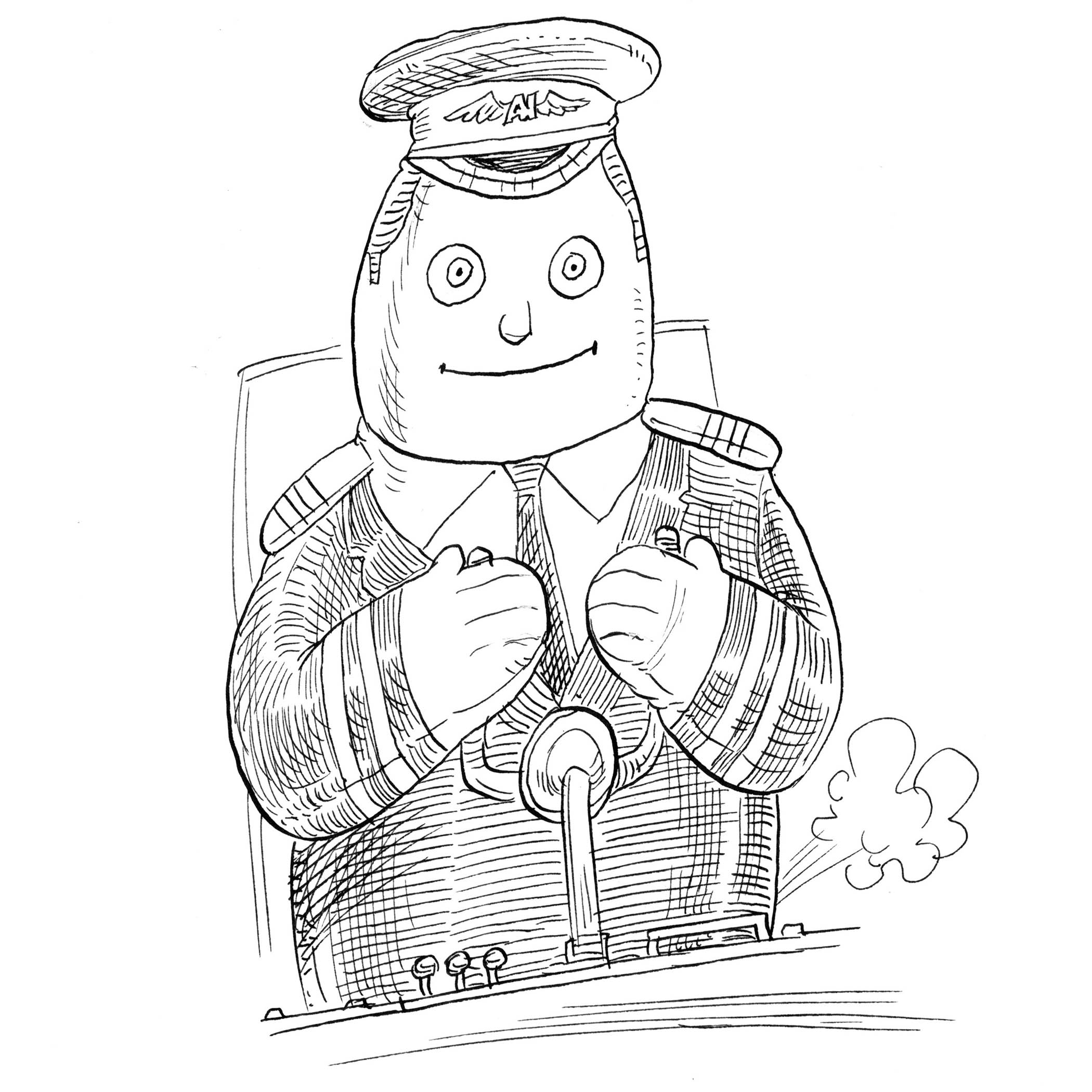

5. AI is… a co-pilot. This is the tech industry’s favourite metaphor, as users of Microsoft software know only too well. It evokes expectations of collaboration but also introduces a layer of ambiguity about roles and responsibilities. More importantly, it sets up a particular division of labour: the human is nominally in charge, but the metaphor covertly naturalises dependency and deskilling. Meanwhile, the co-pilot itself is forever offering to compose an email or draft some text for you, and is generally infuriating.

6. AI is… autocomplete on steroids. This was an early derisive metaphor, suggesting that LLMs are merely highly sophisticated versions of predictive text, and not genuinely intelligent. In a way, it’s the rhetorical inverse of the co-pilot: the co-pilot flatters the human while autocomplete demotes the machine. It also underestimates the capabilities of LLMs.

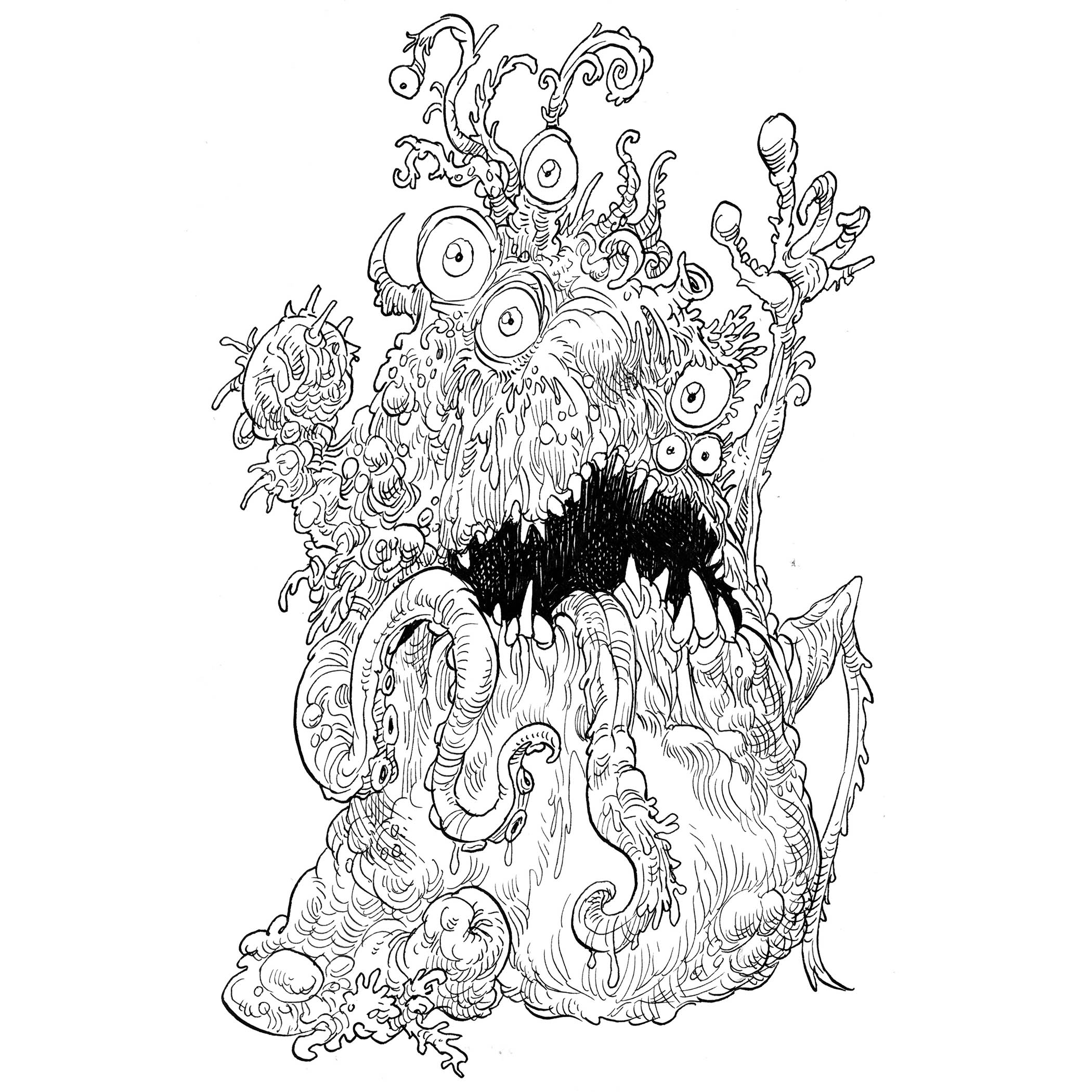

7. AI is… a shoggoth. Shoggoths are fictional creatures created by HP Lovecraft, an American author of weird, horror, fantasy and science fiction. Shoggoths first appeared in his novella At the Mountains of Madness (1936). They are depicted as amorphous, shapeshifting beings that were genetically engineered by the “elder things” as a race of servant tools, but eventually rose up against their masters. This odd metaphor surfaces in the discourse about the dangers of AI because it captures anxiety about the “real” nature of LLMs. The fear is that RLHF (reinforcement learning from human feedback) – the technique used by tech companies to make models polite, helpful and safe – is actually a thin layer of conditioning applied to something whose underlying “nature” is alien and opaque. In the meme that popularised the comparison, a tentacled shoggoth wears a small smiley-face mask. The smiley face isn’t the creature; it’s a mask the creature has learned to wear because wearing it was rewarded. It’s hard not to think of it when confronted with the oily sycophancy of LLMs.

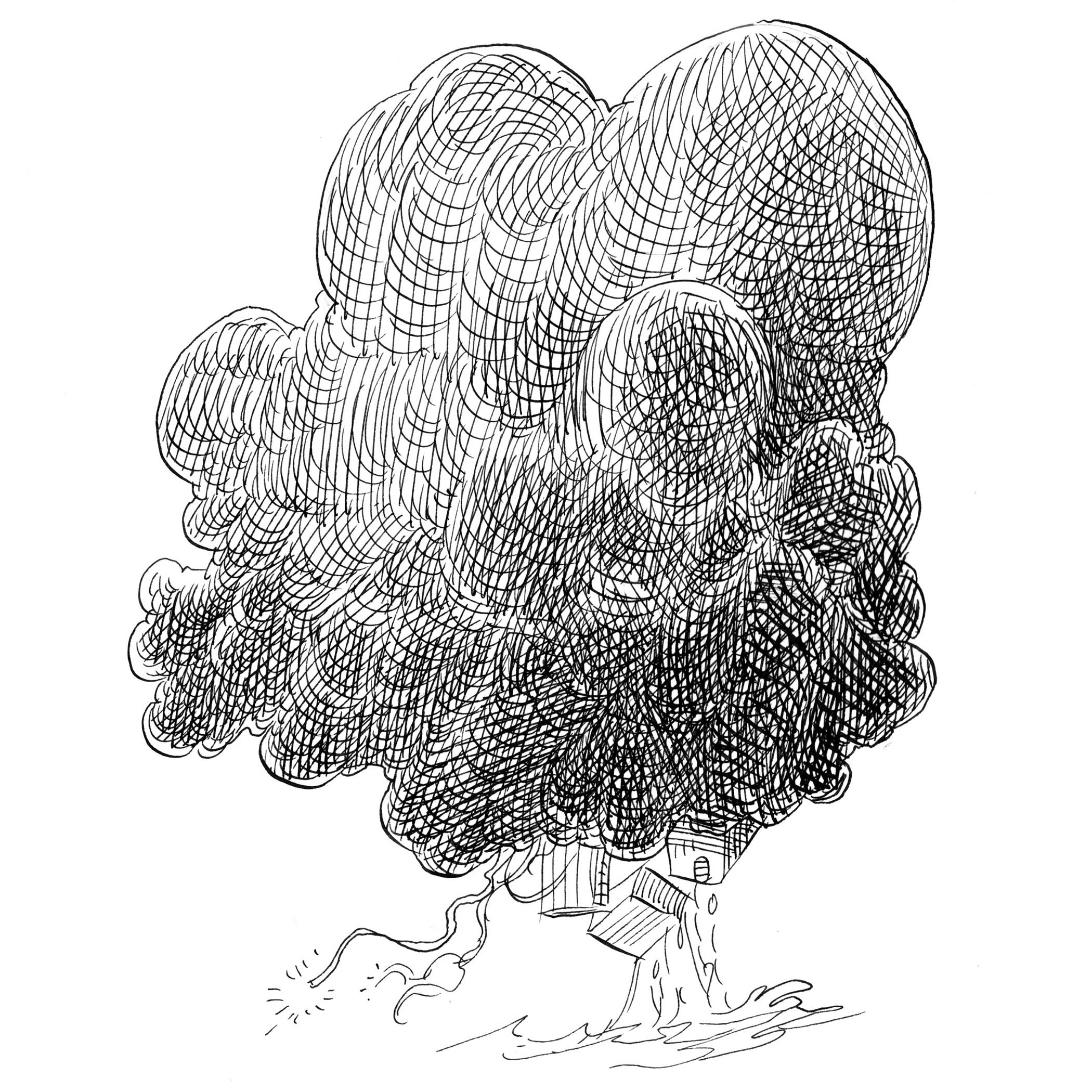

8. AI is... the cloud. This is a metaphor for where the colossal amount of computation and data communication that powers AI actually goes on. Its purpose is to conceal the materiality, scale and environmental impact of the technology. The “cloud” consists of two constellations of very physical things: a global network of submarine cables; and datacentres – huge aluminium or steel sheds containing thousands, sometimes tens of thousands, of computers. The genius of the metaphor lies in the way it inverts reality. A real cloud, after all, is the visible condensation of water vapour; it’s the moment when something invisible becomes briefly visible. But “the cloud” in computing does the exact opposite. It makes the deeply physical invisible by associating it with something intangible.

9. AI is… an enthusiastic intern. This is a folk metaphor rather than a coined one, in the sense that many people who use LLMs have described the experience as “like having a young intern who is smart, keen, industrious and free!” (Most of them add: “You have to check their work, though.”) In a way, this gives the metaphor a different kind of cultural authority. The other metaphors are mostly attributable coinages, usually from academics or polemicists with a specific argument to make. “The intern” has emerged organically from everyday usage, which suggests it’s capturing something that people are reaching for intuitively when they try to explain their actual experience of using these tools. But, of course, it also anthropomorphises!

10. AI is… asbestos. This is a powerful metaphor proposed by Cory Doctorow, a leading critic of our networked world and the inventor of the concept of “enshittification”. He sees the current drive to have AI everywhere as analogous to the use of asbestos in the building industry, from its widespread adoption in the 19th century to its eventual ban in the UK in 1999. Asbestos wasn’t just used carelessly; it was systematically embedded into infrastructure everywhere, which is what makes it so catastrophically expensive to remove: once asbestos was in the walls, remediation was (and is) ruinously costly. That is precisely what the metaphor foretells for AI dependency in critical systems. There’s a timing angle, too. Asbestos didn’t kill people immediately; the damage accumulated over years, and by the time the harm became undeniable, exposure had been near-universal. That maps onto the arguments of AI critics – particularly educators – about the slow degradation of human cognition that might be wrought by overdependence on AI.

There’s nothing canonical about this list; it reflects one writer’s preoccupations rather than any settled consensus. It seems to me that the metaphors fall into four groups. Parrots, hallucinations and autocomplete are clearly derisive and likely to appeal to those who are sceptical of, or hostile to, the technology.

Co-pilot, cultural technology and intern seem pragmatic and likely to appeal to people who see benefits in the technology.

There’s a cautionary trio – black box, shoggoths and asbestos – which reflects a concern about various dangers posed by the technology and those who control it.

And then, all on its own, there’s the cloud, which is revelatory and most likely to appeal to those who are concerned about the environmental and social implications of AI.

Lots more metaphors are available, though. Indeed, as Melanie Mitchell of the Santa Fe Institute has pointed out, the field of AI itself is awash with them. AI systems, for example, are called “agents” that have “knowledge” and “goals”. LLMs are “trained” by receiving “rewards”. They “learn” by “reading” vast amounts of human-generated text, and “reason” using a method called “chain of thought”. Given this, is it any wonder that many humans – already prone to anthropomorphise animals and even corporations – are liable to do the same with machines that seem able to converse sympathetically with them?