It takes a lot of time and testing to bring a new drug to market. Exactly how much is the subject of reasonable debate, but there being some right-ish amount greater than zero is thought to be self-evident. Not so with potentially self-harmful technologies.

Those of us who use AI are something like trialists of randomised, uncontrolled experiments on ourselves. Of course, that’s exactly what we did with social media, and that went… well let’s see, tallying up the psychological, political, and social fallout… catastrophically. Still, we’ve done little to prevent a second bold step onto a new rake.

So, if it’s up to each of us to strip-test and measure our own doses, we could use some help. We asked our roundtable of AI experts for advice on how to use LLMs in the healthiest way, what AI can give us in an ideal world, and who should take responsibility for AI & us.

Notably, no one is underestimating the risks as we did with social media. No “change the world” rhapsodizing. They know it’s all too easy to take the wrong fork and find oneself in darkness. One roundtable member said, “AI psychosis is much, much worse, more widespread and more subtle than people think. I have seen Nobel prize-level scientists fall for these kinds of things within their own area of expertise.”

The Observatory: AI Roundtable

David Astor, the great 20th century editor of The Observer, said that journalism is too interesting and too important to be left to journalists alone. In that spirit, the Observatory is a network of thoughtful people, organised by virtual roundtables of expertise and experience, who help us all make better sense of the world together.

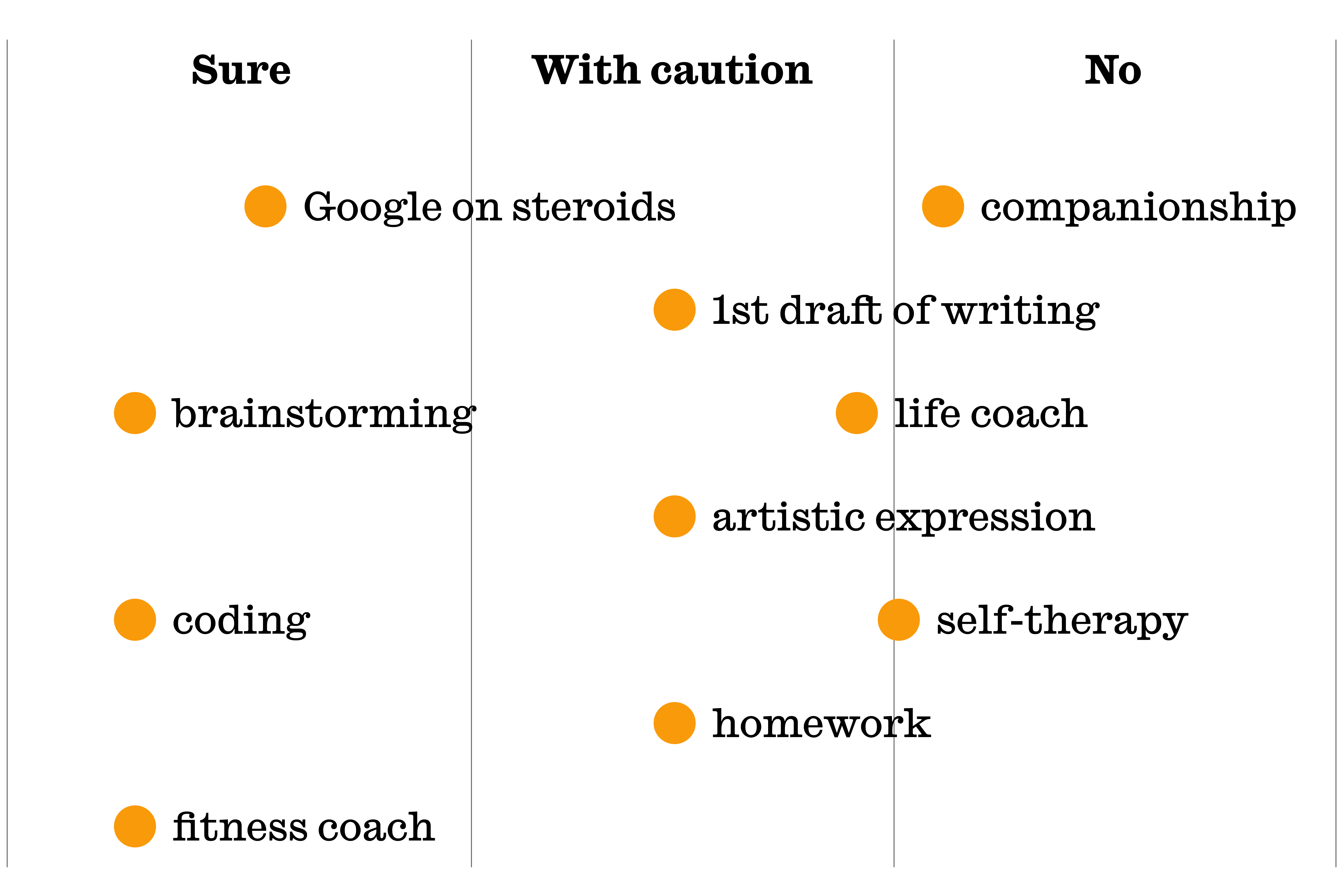

Thinking of family and friends, what would be your recommendation for when and when not to use AI?

Care to share anything else based on how you answered?

“Ensure that you are not breaking copyright law; ensure that the AI you are using is reputable; and not to take anything you are told too seriously. Above all, don’t get married to it.”

“Even hardened expert AI safety researchers can be sucked into a delusionary spiral by these systems. Highly recommend John Oliver’s recent piece on chatbots.”

“It depends on the individual. If they are skeptical and curious it’s fine. If they are gullible and vulnerable it’s dangerous.”

“Workslop is becoming an issue and this applies to workplace, university and school use. We all need to use it but in the appropriate way. Professor Jeff Hancock’s new paper on AI adoption - path to augmentation and path to automation is a very helpful guide.”

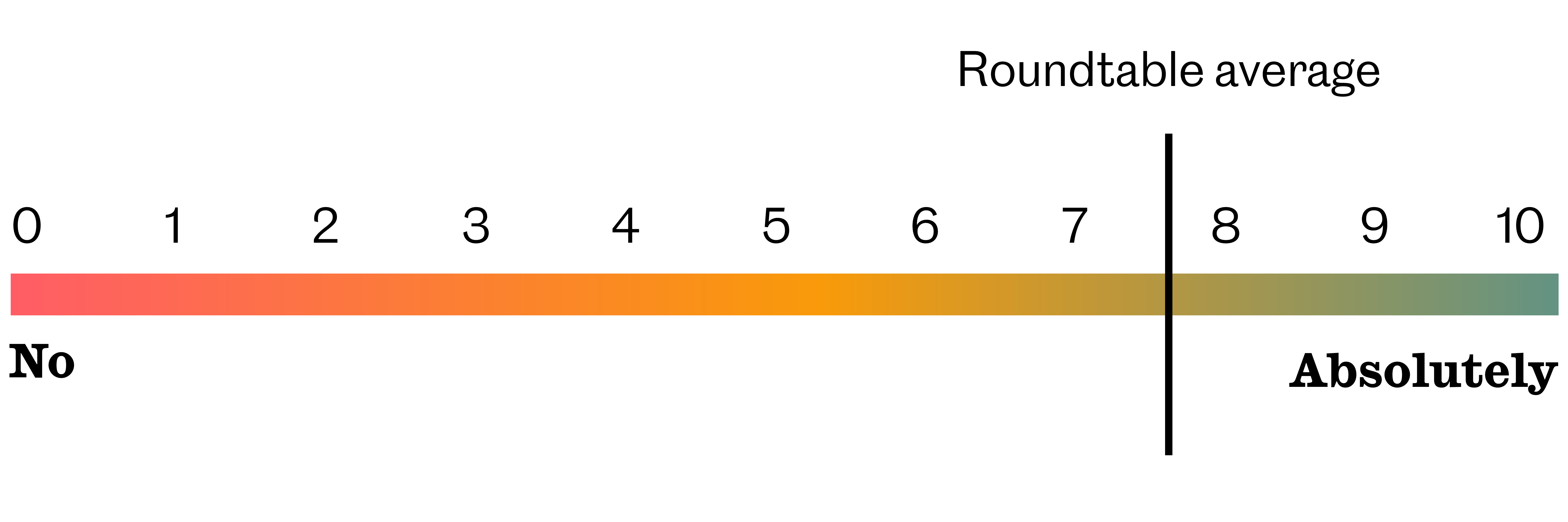

As the AI revolution unfolds, should we start thinking about keeping our minds in shape?

(0 for no, 10 for absolutely)

Roundtable average: 7.6

Finish the sentence if you’d like: Ideally, the incredible efficiency of AI will give us much more time for:

“Being better humans - care, focus, interest, love….”

“Suing AI companies”

“It won’t give us more time in the long run. The shit we deal with will just be different.”

“Building systems of governance that give us the kinds of lives and societies we want.”

“Creative thinking”

Many worry we’re repeating mistakes from the initial proliferation of social media. Namely, not reckoning with psychological, cognitive, social, and political consequences. Who should be taking responsibility for addressing this concern?

“Government regulation of AI is currently a prisoner’s dilemma. Everyone knows that this needs to happen but everyone is scared to make too strong a move in case it leads to relative weakness in the ‘race’ for innovation and the much-blessed AGI [artificial general intelligence].”

“Obviously, patently, without any shadow of a doubt, the AI platform companies themselves. These tech companies keep telling us that legislation can’t keep up with tech. They’re right. So therefore they have to self-regulate.”

“The businesses who run the models and most importantly the funds who invest in them and often encourage the most egregious behaviour.”

“Only governments and courts can directly change corporate behavior. We can help people protect themselves to some extent, and parents can help protect their children (if they understand the risks), but isn’t this why we have governments?”

“Individuals, but government is doing the UK a massive disservice by slavishly following tech bro advice.”

“Everyone (governments/ civil society/ public at large/ multinational organisations)”

“De jure, this is squarely the purpose of the regulatory apparatus of government. This is a classic common goods problem, where unregulated markets will simply exploit the safety commons until we all suffer, and only regulation uniformly and strictly enforced can solve the dilemma. De facto, our governments are in many ways crippled, and we as citizens of democracies have to stand up and help our governments be empowered to do what we need of them.”

To learn more about Observatory roundtables, email observatory@observer.co.uk.
