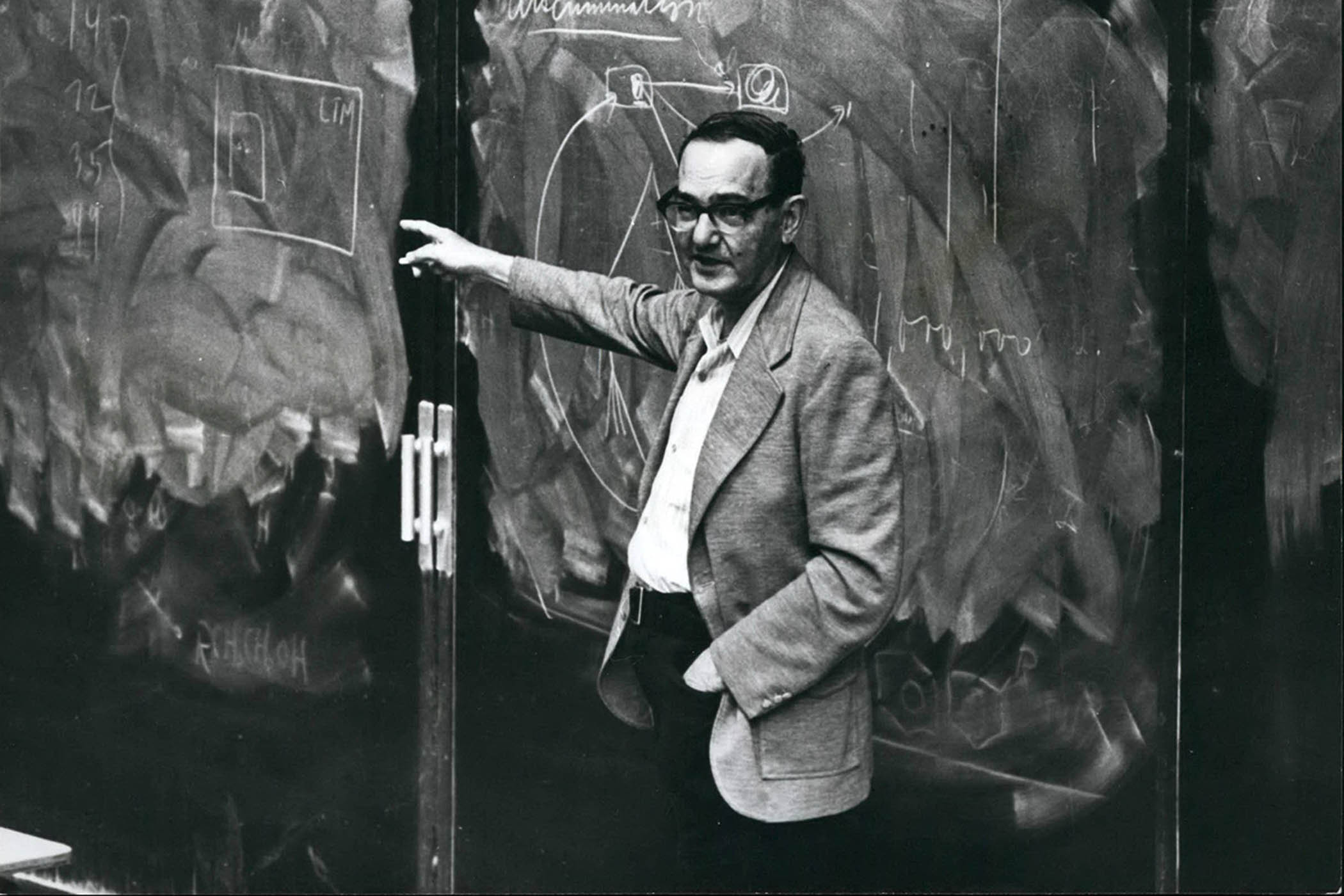

Way back in 1971, the economist (and Nobel laureate) Herbert Simon made an eerily prescient observation. The world was entering an era when information was becoming abundant and he was wondering what the long-term implications of this “infoglut” would be.

His answer was simple. “A wealth of information”, he said, “creates a poverty of attention and a need to allocate that attention efficiently among the overabundance of information sources that might consume it.” Interestingly, Simon was thinking about this before the internet that we use today existed.

But its military predecessor, the Arpanet, was up and running, and Carnegie Mellon University, where he worked, had in 1971 become one of the first 15 nodes of that network. So he doubtless had some insight into what was coming down the line.

His seminal thought – that attention would become the scarce (and therefore valuable) commodity of the networked world – turned out to be the basis for a huge industry built on capturing and monetising human attention. Mark Zuckerberg, Elon Musk, the Google founders and a host of other tech bros have made billions from it. And we’ve been living with the consequences of that ever since.

But now there’s a new general-purpose technology on the block – AI – and it raises the same question that preoccupied Simon decades ago: what will be superabundant in an AI-dominated world? And what will be scarce? The most intriguing attempt at an answer I’ve seen so far has recently come from a trio of American researchers in a paper with the enigmatic title Some Simple Economics of AGI.

“For millennia,” they write, “human cognition was the primary engine of progress on Earth.” But, as AI “decouples cognition from biology”, the marginal cost of cognition (AKA artificial “thinking”) falls to near zero, and machines absorb any human labour that is capturable by metrics, including creative, analytical and innovative work. The binding constraint on growth will then no longer be intelligence but what the authors call “human verification bandwidth”: the capacity of humans to validate, audit and underwrite responsibility when machine-powered cognition is superabundant.

Since all three authors hail from US business schools, the gung-ho literary style of their 113-page vision of the future was predictable. An example is their certainty that any kind of cognitive (white collar) work that can be metrically evaluated will be taken over by machines.

Likewise, their conception of “cognition” is, well, brusque. It’s “a second, alien form of cognition”, they write, “one trained not by the physical friction of survival, but by compressing, predicting, and recombining the sum total of digitised human thought. This new intelligence does not inherit our muscles, our hormones, or our evolutionary priors. It inherits something else: a vast latent map of what our species has written, drawn, coded, and measured.”

Despite its shortcomings, what’s useful about the paper is that it could serve as an antidote to imaginative failure. Most of us are blundering around in the same kind of mist that enveloped Simon’s audience in 1971. We think of AI as either an augmenter of human capability or a replacement for it.

The paper’s authors nudge us to imagine a radically different world: one in which artificial “cognition” is superabundant and on tap, but where the capacity for human oversight has become not only scarce and valuable but often essential, not least because that alien “cognition” has flaws, hallucinates and makes mistakes.

In such a world, the economy’s binding constraint would no longer be how smart machines can be, but how much of their output humans can meaningfully check.

The inescapable implication is that to prevent avoidable catastrophes with this new alien intelligence, we need to have humans in the loop; people who are hired not because they know how to use contemporary AIs but because they have been trained in critically overseeing the machines’ output.

Paradoxically, at the moment, we seem to be heading in exactly the opposite direction, with employers viewing the technology as a way of getting rid of people. And – worse still – as a justification for not hiring young people, when they should be thinking of building a talent pipeline of staff who know how to verify and validate AI outputs and generally keep the technology under control.

If Simon were still around (he died in 2001), I guess that, in his laconic way, he’d say that the bottleneck is no longer thinking: it’s checking the thinking. And if you’ve become a proud user of AI in your business and regard verification as just a tiresome optional expense, it may be worth asking a lawyer about liability. And maybe taking out insurance.

What I’m reading

King trumps joker

How Charles III Quietly Filleted Donald Trump in His Own House is a bitingly sharp commentary by Dean Blundell on the monarch humorously outfoxing the US president.

Labour exchange

An interesting examination of employment by Rishad Tobaccowala is Jobs Are a Phase Work Is Going Through.

Prophets of doom

Muhammad Aurangzeb Ahmad’s On Teaching Machines to Predict Death is an intriguing look at “automation bias” in healthcare.

Photograph by Keystone Press / Alamy Stock Photo