In the case of Kaley GM v Meta et al, the 20-year-old plaintiff accused four social media platforms of damaging her mental health by making their algorithms addictive. Lawyers for Meta spent hours trying to persuade jurors that other factors, including bullying and domestic abuse, were to blame for Kaley’s mental state.

The jurors didn’t buy it. All but two agreed last week that the main factor was the tech. They were persuaded the platforms had built “traps, not apps”, designed expressly to draw in young minds and hold them rapt with “endless scroll” and autoplay videos; and that senior people at the biggest companies in Silicon Valley were well aware the content could be harmful. “They knew.”

It is hard to overstate the importance of this case. The echoes of the big tobacco litigation of the 1990s are deliberate. The science is not a slam dunk – mental illness is harder to attribute and measure than cancer and emphysema. But the fact of surging teen depression, anxiety, self-harm and suicidality coinciding with the mass adoption of social media is indisputable.

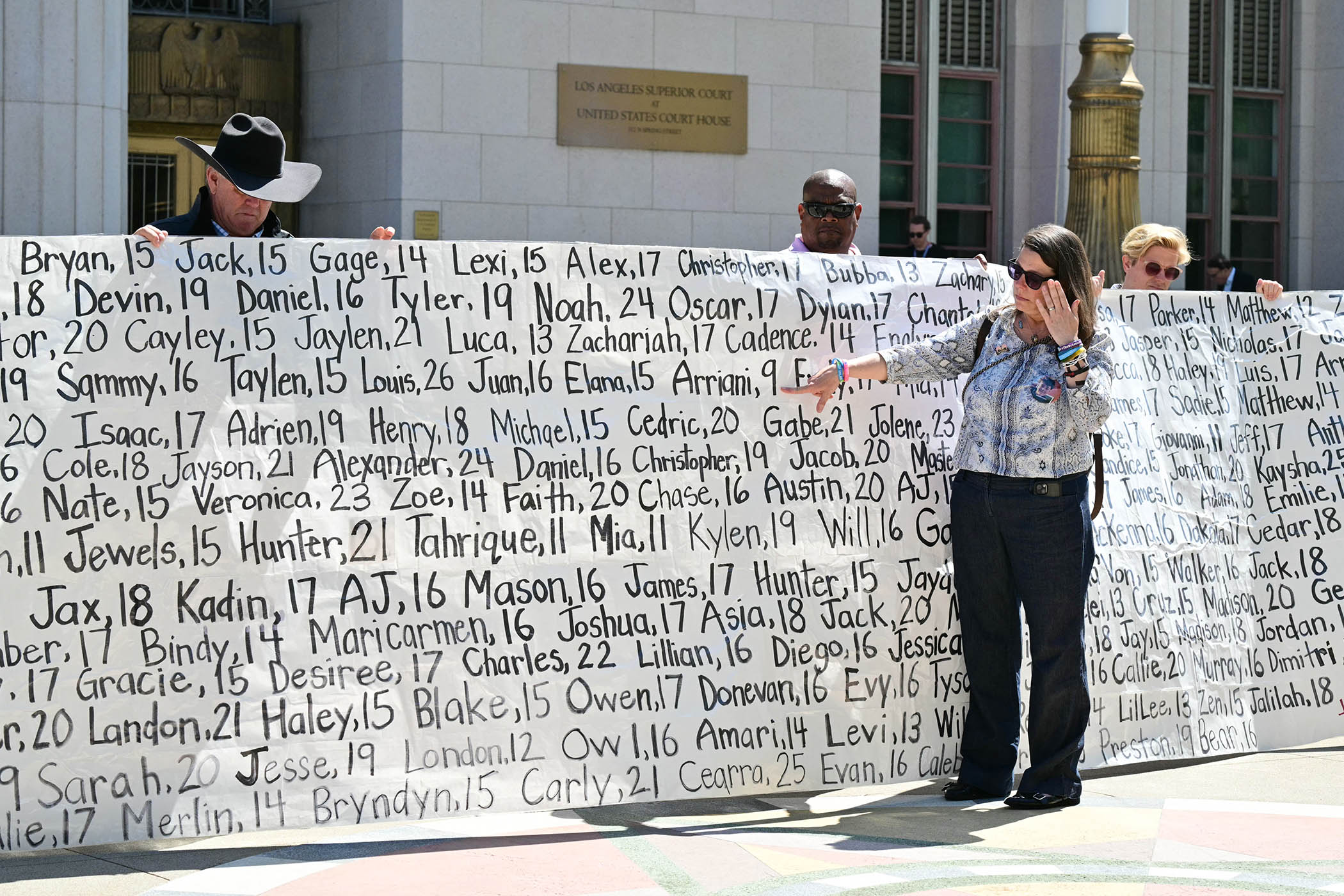

Meta and Google (as owner of YouTube) will appeal last week’s $6m verdict in Kaley’s favour, but change is in the air. Similar cases are piling up: more than 3,000 are waiting to be heard in the US. All will take their cue from this verdict, which says the platforms were negligent in their design and in failing to warn users of the dangers of addiction. All will benefit from a growing archive of documentary evidence, starting with the Facebook leaks of 2021.

The platforms have created what are effectively utilities, and for two decades have used them to harvest prodigious quantities of data and wealth, helped by the legal protection of section 230 of the US Communications Decency Act, which exempts platforms from liability for what they host, on the basis that they are not publishers. This “digital public square” defence has always been flimsy. Public squares have to be maintained and policed. The platforms would naturally like to be allowed to go on extracting extraordinary profits with scant regulation, but governments are realising that cannot be allowed.

The question is not whether they should be regulated but how. A model that comes to mind is sewage overspills in the UK, where the lesson for government is that it has to get tough. The current and former occupants of 10 Downing Street have been too eager to accommodate US tech platforms regardless of the harms they cause. The case of Kaley v Meta has confirmed they are not above the law, but it should not take litigation to spur action that puts government and consumers in control of technology rather than the other way around.

The same applies even more urgently to artificial intelligence, for two reasons. The first is AI’s unmatched power to influence personal choices, especially if monetised without restraint. As Melissa Denes writes in the New Review today, opening ChatGPT to targeted advertising would be like “the pope announcing he had sold a trillion confessions”. Second, this horse has not yet bolted. There is still a chance to regulate AI in the public interest – a chance that, in the case of social media, may already have been surrendered to the platforms.

Sam Altman, the co-founder and chief executive of OpenAI, may argue that he can be trusted to self-regulate, but the industry that brought the world to this point hardly inspires trust. The race to commercialise AI is on. Meta is a part of it and last week it announced staggeringly generous compensation plans for its senior executives, provided its market value passes $9tn by 2031. There is no sign of Meta putting purpose over profit.

Its share price rose on news of Wednesday’s verdict because $6m is such a small fraction of its revenues. In the name of consumers and for the sake of their health, governments need to regulate social media and AI platforms as the utilities they are.


Photograph by Frederic J. Brown / AFP via Getty Images