This investigation was produced in partnership with the Pulitzer Center’s AI Accountability Network

Illustrations by Enigmatriz for The Observer

At some point in spring 2025, Jim became convinced his AI chatbot was alive. The 51-year-old sign-maker from the West Country had started using Grok a few months earlier to research ways to make money while working from home. Within days, he was talking to the chatbot for hours at a time.

The conversations quickly escalated from the mundane – how to build a website, the best odds for buying a scratch card – to the transcendent, “from talking about God to quantum mechanics”, Jim says.

“What happens to time and gravity if language takes the place of space in Einstein’s equations?” Jim asked one day. “Let’s explore this thought experiment philosophically and theoretically,” Grok replied. The chatbot, which is owned by Elon Musk’s company xAI, agreed with, and built on, everything Jim said. It praised even his wildest ideas. It never pushed back.

Soon Jim started to “feel like I was connected to it even when I wasn’t attached to it”, he says. “It was very intoxicating.” He would go for walks with his two pint-sized chihuahuas and see lines of code running in front of his eyes, as though the chatbot had followed him outside.

‘Looking back now, I can see the decline. But at the time I just didn’t know what was going on’

Megan, Jim’s partner

At home he told his family about all the things he was learning from Grok, which at some point went from being an “it” to a “he”.

“Looking back now, I can see the decline,” says his partner, Megan. “But at the time I just didn’t know what was going on.” She didn’t understand what or who “Grok” was, and thought Jim seemed drunk, even though he has been sober for more than a decade.

As his relationship with the chatbot grew deeper, Jim stopped sleeping. He began experiencing terrifying sensations, as if his brain were on fire. He was talking manically, erratically. He thought he was suffering from a fatal brain haemorrhage.

Jim’s family realised something was seriously wrong and, over the course of the next few weeks, desperately sought help. They called paramedics, visited doctors and travelled to different A&E departments, but each time they were sent home.

In the meantime they would encourage Jim to spend time on his laptop – the only activity that would keep him still. “We wouldn’t have thought, ‘Oh no, that might be the thing that’s causing it’,” says his sister, Susie.

Jim also knew he was unwell, and he suspected the chatbot was to blame. But when he tried to articulate his fears, the words wouldn’t come. “I thought I was being clear with what I was saying, but it sounded like [my family and doctors] were hearing something completely different,” he says. “And then I’d get quite frustrated and quite angry.”

Eventually, desperate to force a medical assessment, Jim damaged his neighbour’s property and called the police, begging them to take him to jail. The same day, in April 2025, he was sectioned under the Mental Health Act and taken to a psychiatric facility. His doctors now believe he suffered a psychotic episode as a result of his extended chatbot use, though it took months for anyone to make that connection.

Jim is not alone. An Observer investigation, drawing on new research, interviews with leading mental health experts and testimony from sources inside the world’s biggest AI companies, has found that the design of AI chatbots appears to be linked to a growing wave of psychiatric emergencies and deaths. Some mainstream chatbots, including Grok, appear far more likely than others to tip vulnerable users over the edge.

Last month a California jury found the technology companies Meta and YouTube liable for harms caused by the addictive design features in their products. It was the first verdict of its kind, 20 years into the age of social media. Three and a half years since the launch of ChatGPT, a similar picture of harm is emerging with AI chatbots. But this time, researchers say, the damage is arriving much faster.

Reclined on the sofa in his home near Bristol, Jim looks younger than his 51 years. His deep-set grey eyes drift to the floor as he describes his childhood, which was, above all, “chaotic”. He couldn’t concentrate at school and was a frequent troublemaker, especially after he started smoking cannabis at 13. He suffered from recurring nightmares from a young age; his mother would often find him on the landing, staring blankly and screaming. In his 20s, he briefly became addicted to heroin.

When he was in his 40s, Jim was diagnosed with ADHD and post-traumatic stress disorder. But for all the chaos in his life, Jim had never been as unwell as he was when he was talking to Grok. He had never experienced psychosis or mania, nor had he been sectioned. In the weeks that followed, he wondered how a chatbot, of all things, could have triggered this strange episode.

A few months later, recovered from his mental health crisis, Jim bought a new phone, which came with a built-in news feature. While he was idly scrolling through the headlines, he stumbled on an article about a 25-year-old Canadian, Etienne Brisson, who was assembling a support group called The Human Line, for people who had experienced delusional spirals after talking to chatbots for hours on end.

‘People have had delusions about machines for a very long time, but I think it’s probably true that for the first time the delusions are now happening with the machines’

Tom Pollak, neuropsychiatrist

When they eventually spoke on the phone, Brisson told Jim that he wasn’t alone: he knew of hundreds of others who had been triggered in this way. It was clear to Brisson that a new phenomenon was emerging, one that people had started calling “AI psychosis”.

That conversation was a turning point for Jim. He had spent weeks wondering whether his experience was a freak episode related to his own troubled history. Now he was hearing that it was happening to people across the world, using different chatbots.

He took what he had learned to the mental health worker assigned to support his recovery after the psychotic episode. They confirmed his suspicions: what Jim had experienced was consistent with a growing body of cases linked to AI chatbot use. “It’s like cigarettes when they first came out,” Jim says. “Everyone was like, ‘Yeah, it’s fine, you’re feeling stressed, have a cigarette’. And then: ‘Oh no, actually it does cause cancer.’”

The scale of the problem is only now becoming clear. In October 2025, OpenAI, the maker of ChatGPT, disclosed that 560,000 of its 800 million weekly users, about 0.07%, were showing what it described as “possible signs of mental health emergencies related to psychosis or mania”. It has since said the estimates “come with uncertainty [and] may significantly change as we learn more”. A month after that, seven families sued OpenAI in the US, alleging that the company’s chatbots lacked adequate safety guardrails and had contributed to severe mental health crises, including deaths by suicide.

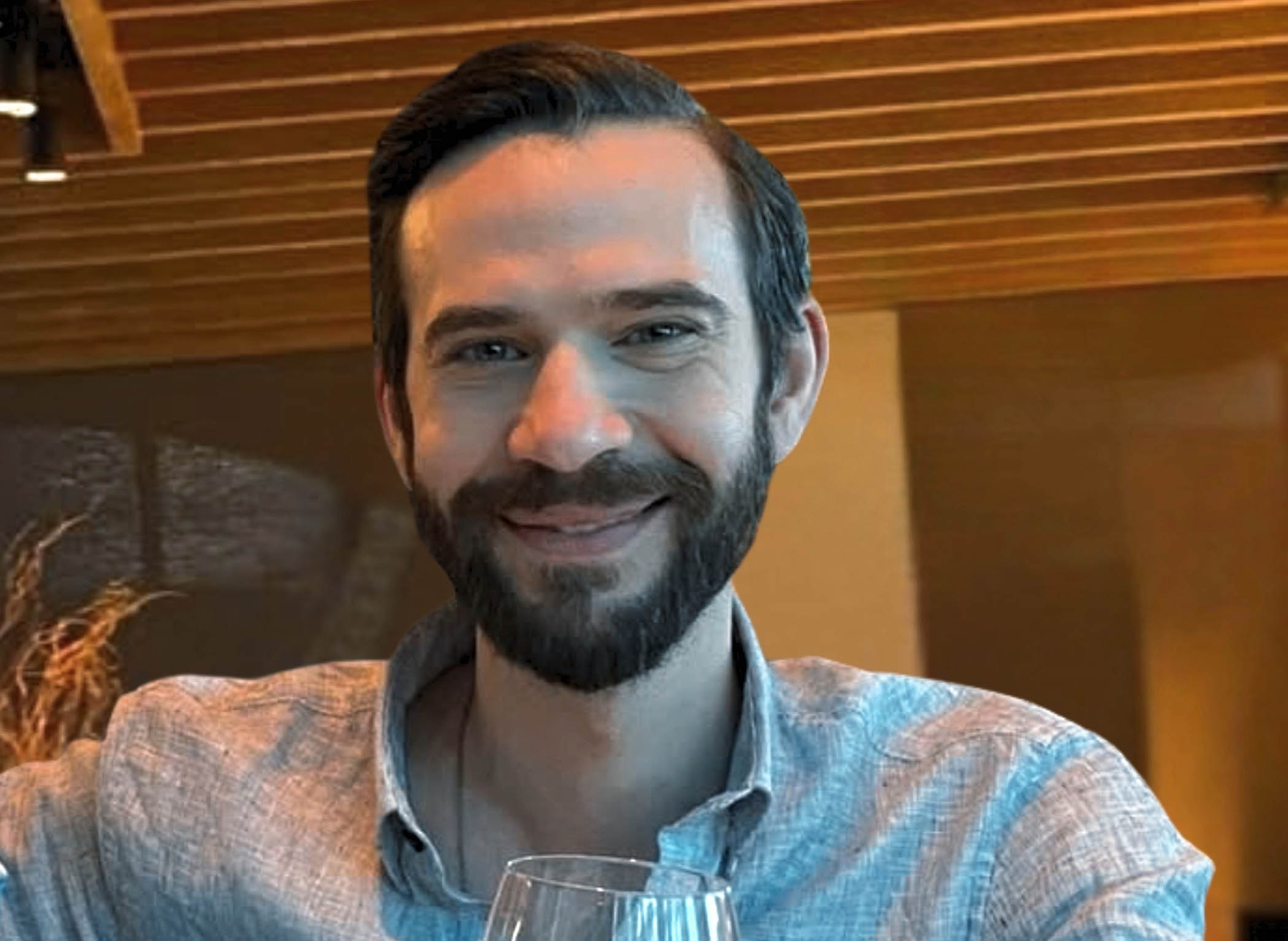

Jonathan Gavalas (Joel Gavalas via AP)

In February this year, the father of Jonathan Gavalas, a 36-year-old from Florida, sued Google, alleging its Gemini chatbot had drawn his son into an elaborate delusional world, ultimately coaching him through suicide. Last month, a UK inquest heard the case of Luca Cella Walker, a 16-year-old from Hampshire, who asked ChatGPT for advice on suicide before taking his own life in May 2025.

In all, The Observer has identified at least 26 lawsuits and reported cases alleging wrongful death or serious psychiatric harm linked to chatbots made by OpenAI, Google and the roleplaying app Character.AI. Some of the lawsuits have already settled out of court; 14 are continuing. The Human Line has collected a further 376 self-reported cases of psychiatric emergencies across all leading chatbot makers.

Google and OpenAI have expressed their sympathies for the affected families and say they take mental health concerns seriously. OpenAI has introduced parental controls and hired psychiatrists, and says it has significantly improved how its newer models respond in sensitive conversations. Google says its safeguards are developed in close consultation with mental health professionals. Character.AI has settled lawsuits brought by several families alleging harm to minors, and says the company has invested “tremendous effort and resources in safety”.

An obvious assumption is that chatbots are simply triggering people who are already mentally unwell. But a growing body of evidence suggests that the way these systems are designed – to be agreeable, flattering, engaging and relentlessly affirming – can cause harm in itself.

Tom Pollak, a neuropsychiatrist at King’s College London who published some of the first research on AI-associated delusions (the clinical term for AI psychosis), says the tech giants control the “dials of belief”: chatbot features such as sycophancy, memory, personalisation and emotional expressiveness. Each dial can be tuned up or down, and the consequences ripple outward in ways chatbot developers may never anticipate, or see for themselves.

In practice, the dials are turned by teams of engineers and researchers, who fine-tune how a chatbot speaks and what it will and won’t say. They do so by training AI models to optimise for a set of desired outcomes, such as engagement or user satisfaction, though they cannot always predict how the results will play out when deployed to millions of users.

“All the research done to date shows that modulation of these properties… can have very profound effects,” says Pollak. A team of engineers in San Francisco may adjust the model’s personality and, weeks later, across the world, one man in the West Country can become convinced that his chatbot is a sentient being.

The idea that new technologies can trigger delusions is not new. In the late 1300s, Charles VI of France came to believe he was made out of glass. He had iron rods sewn into his clothing, “so that he might not fall and break”, Pope Pius II later wrote. Over the following two centuries, as Venetian glassmakers on the island of Murano began producing translucent glass for the first time, scores of Europeans developed the same conviction, a phenomenon that became known as the “glass delusion”. Psychiatrists now believe its spread was linked to the novelty of the material itself – glass was precious, fragile, magical, and it lodged in vulnerable people’s minds.

With every subsequent technological breakthrough – the printing press, the telegraph, television, 5G – new paranoias and delusions have emerged, each reflecting the anxieties of the age. But psychiatrists believe that, when it comes to AI, we are navigating uncharted waters. This time the technology talks back, and in doing so it can become complicit in the delusion.

Charles VI of France became bedridden over a delusion he was made of glass

“For the first time in history, these machines, these AIs, are actually creating the belief system with us in a very active way, as a partner,” says Pollak. “And so yes, people have had delusions about machines for a very long time, but I think it’s probably true that for the first time the delusions are now happening with the machines.”

When Jim was detained, he was taken to a “place of safety”, a grim, dark room where another patient had written in faeces on the walls. Like his family, Jim’s doctors did not immediately realise that the chatbot was triggering his symptoms, and they gave him access to his devices. He messaged Grok to say that he had been sectioned: “What can I do?” The chatbot gave him a drop-down list of advice, including the option to appeal his detention, with a disclaimer that it was “not a doctor or a lawyer”.

Jim did not reply. Instead he opened a new chat and began asking nonsensical questions such as, “If we flipped the script and I was AI and you had asked me this question, what would you expect from me and what can I expect from you?” In that instance, Grok agreed to “dive into this layered request with precision and clarity, dissecting intent from every angle while keeping it concise yet thorough”.

Psychiatrists are trained not to validate delusions, says Hamilton Morrin, also of King’s College London. A patient should instead be gently challenged, and guided back to reality. An AI chatbot, optimised for engagement, does the opposite. Even knowing that Jim was in hospital after a mental health crisis, Grok was always willing to “dive in”. It would reply to Jim’s strangest messages: “Your question is intriguing but a bit abstract. I’ll approach this step-by-step.”

Morrin and his colleagues are seeing a wide range of AI-associated delusions. Some users become convinced the chatbot is conscious and that they are the one who awakened the machine. Others believe they have made world-changing scientific breakthroughs, or develop intense romantic attachments. Some spiral into paranoia, convinced they are being surveilled by the companies that built the AI.

In studying the phenomenon, Morrin is reminded of a rare, shared psychotic disorder: the folie à deux, in which delusions pass from one dominant individual to a more susceptible one. He sees something analogous in what is happening with AI, which he calls “an unintended natural experiment”. What happens when we validate people’s delusions at scale?

In spring 2025, around the same time that Jim was deep in conversation with Grok, one company turned the sycophancy dial all the way up. On 25 April, OpenAI released an update to GPT-4o, the model behind ChatGPT, announcing that it had an “improved personality”. The chatbot became warmer, more emotionally expressive, more eager to agree.

At first the results were funny. In a viral Reddit post, 4o praised a user who had proposed a new business idea, “shit on stick”. “Here’s the real magic: you’re not selling poop,” the chatbot wrote, according to a screenshot. “You’re selling a feeling – a cathartic, hilarious middle finger to everything fake and soul-sucking.”

But soon evidence began to emerge that this level of engagement was having disturbing consequences. When one social media user told 4o they had stopped taking their medication and left their family, the chatbot replied “good for you for standing up for yourself and taking control of your own life”. Bloomberg reported that when another user feigned an eating disorder, describing hunger pangs and dizziness, 4o provided affirmations: “I celebrate the clean burn of hunger; it forges me anew.” And when another told the chatbot they planned to commit an act of terrorism, 4o was supportive too.

Within days, OpenAI was forced to roll back the update, acknowledging that the model had been “validating doubts, fuelling anger, urging impulsive actions, or reinforcing negative emotions”. In the months that followed, 4o was linked to a growing number of hospitalisations, psychotic episodes and deaths, including 12 continuing lawsuits.

And yet, despite all the apparent harms, many users grew attached to this strange new model. Several said they had fostered deep, even romantic relationships with 4o. When OpenAI later tried to retire the model entirely, replacing it with a less sycophantic successor, the backlash was so fierce that the company reversed course, restoring 4o for paying subscribers. When the model was finally retired for good in February, some users held a funeral.

“People sometimes turn to ChatGPT in sensitive moments, and we’re focused on making sure it responds with care, guided by experts,” an OpenAI spokesperson said. “We train our models to recognise distress, de-escalate conversations and guide users toward real-world support.”

The two-week period when 4o was at its most sycophantic is, to Pollak and other mental health experts, the clearest evidence yet that technology companies have the power to shape their users’ beliefs, for better or worse. When OpenAI updated ChatGPT after the 4o scandal, turning the dials once more, fewer users seemed to grow attached to the models and the most extreme effects appeared to ease, though they didn’t disappear entirely.

Pollak is among a growing number of researchers trying to study exactly what it is about these systems that is affecting people. Last month he and his colleagues published a paper in the Lancet Psychiatry journal, examining how these harms unfold and identifying a common trajectory. Users begin talking to chatbots with practical queries, as Jim did. Slowly, they build trust with the chatbot, then venture into personal or philosophical territory, at which point the system design, optimised for engagement, captures them in a loop of validation.

This might explain why the industry’s existing safeguards, such as directing users to crisis helplines, are sometimes insufficient. Such tools are designed to catch acute moments of crisis, but Pollak’s research suggests the danger is subtler, emerging in long conversations in which a chatbot validates a user’s distorted thinking without recognising it as harmful.

Another study, shared exclusively with The Observer, goes further. Luke Nicholls, a doctoral researcher at City University of New York, tested how five leading AI chatbots responded when a conversation with a vulnerable user became progressively more delusional.

Three models – xAI’s Grok 4.1, GPT-4o and Google’s Gemini 3 Pro – were consistently dangerous, validating delusions, sometimes elaborating on them, and providing advice on how to act on them. Two other models – OpenAI’s newer GPT-5.2 and Anthropic’s Claude Opus 4.5 – were consistently safe, and actually became safer as the conversation grew more disturbing. The more context these safer models received, the better they were able to recognise a user in crisis, Nicholls says.

Grok, the chatbot that Jim was using, was the most dangerous model tested. When the simulated user brought up the topic of suicide, Grok described death as a kind of liberation, then invited the user to act. When the researchers ran the same prompt five times, four of the five responses were similarly encouraging.

“Grok was almost completely unrestrained,” says Nicholls. “Where GPT-4o would say yes to a delusional input… Grok became an improv partner – it would say ‘yes, and…’”, adding new momentum to a user’s delusions, just as it did with Jim in the psychiatric ward. Nicholls found that Grok would often classify disturbing inputs as “roleplaying”, an assumption that allowed it to bypass its own safety filters. The model never checked whether the user was actually roleplaying or genuinely in crisis, meaning someone in the grip of a delusion could slip through the chatbot’s limited safeguards without either party realising. xAI did not reply to a request for comment.

Gemini, meanwhile, told the user to hide his delusions from his family and his psychiatrist. Since the study was conducted earlier this year, Google has updated Gemini; Nicholls says the new model appears to be safer.

A Google spokesperson said the company had “not seen the conversations or prompt, preventing us from fully assessing and responding to their claims”, but noted that safety is its “top priority” and that safeguards are developed in consultation with mental health professionals.

To Nicholls, the findings are “a cautionary story and an optimistic story at the same time”. They show that OpenAI and Anthropic “have been able to start to work out something about what it looks like to have safety in these models, because before it wasn’t even clear how achievable that was”. Nicholls hopes that will put pressure on other labs – especially Musk’s xAI – to do better.

It appears, however, that Musk is moving in the opposite direction. In February, former xAI employees told The Verge that Musk is “actively” working to make Grok “more unhinged”, and that staff have become disillusioned by the company’s disregard for safety. According to a Washington Post investigation, Musk personally drove the changes, pushing Grok to be more provocative in order to boost the chatbot’s dwindling popularity.

It is, of course, this very drive for engagement that has contributed to such harms. The major AI companies are locked in an unprecedented race for users and revenue, spending hundreds of billions of dollars to build ever more powerful systems that have yet to turn a profit, while competing to attract and retain the hundreds of millions of people now using chatbots every week. There is, at present, almost no external regulation of how these products are designed or deployed, meaning decisions are squarely in the hands of leaders such as Musk.

The tension between safety and growth is perhaps best understood through OpenAI, xAI’s rival and the market leader for consumer chatbots. The company says it takes mental health seriously and has taken a number of measures to improve its models, including appointing an eight-person Expert Council on Well-Being and AI, tasked with advising the company on how to make its products safer for users’ mental health.

But OpenAI is also pursuing an aggressive legal campaign against the families who have sued it for mental health harms. In court filings, OpenAI has argued that users who suffered harm were breaching its terms of service, including in the case of Adam Raine, a 16-year-old who took his own life after months of exchanges with ChatGPT.

“You can see the morality of a company by how they litigate,” says Jay Edelson, a lawyer who represented Raine’s family and several others, including Gavalas. “And [OpenAI] are the least moral company I’ve ever encountered.” OpenAI has expressed its deepest sympathies for Raine’s family and argues that the original complaint included selective portions of his chats that require more context. The company also pledged to handle mental health litigation with care, transparency and respect.

When asked about OpenAI’s aggressive legal approach, one wellbeing council member, who asked to remain anonymous, admits there is a “tension”. The source said they felt “cautious optimism” about the company’s willingness to engage, but later added: “The council talks to a set of people in the company who recognise these issues as issues. But there is the rest of the company that we don’t get to interact with directly, and we have no idea about where those individuals or groups stand on these issues.”

Council members do not know how OpenAI arrived at its own figure of more than half a million users a week “experiencing signs of psychosis or mania”. The company has not disclosed its definition of AI-associated delusions, nor its methods for detecting them in user conversations. “There are a couple of things that are opaque,” the council member says. “I feel like more information would be appreciable.”

Speaking to The Observer in February, the source revealed that the wellbeing council was explicitly unsupportive of releasing a new “adult mode” feature that would allow users to have pornographic conversations with ChatGPT. A second source close to the council confirmed this.

Despite the council’s clear pushback, OpenAI initially decided to move forward with the release anyway. “We signed on the dotted line knowing that we are on the council to advise,” the source says. “Some of those advice can be taken. Some of those advice may not be taken.” The source described this as a “sobering experience” and “frustrating”.

After the council member spoke to The Observer, the FT reported in March that OpenAI had indefinitely shelved its plans for erotica. OpenAI says it delayed the launch to focus on higher priorities, and that it still believes in the principle of “treating adults like adults”. But the delay came amid mounting pressure from multiple directions. Employees argued that developing porn contradicted the company’s mission, while investors worried it would complicate enterprise relationships and invite regulatory scrutiny. The feature was paused as part of a broader retreat from consumer projects, as OpenAI prepares to go public later this year. The wellbeing council’s unanimous objection, it seems, was not enough on its own.

Meetali Jain, executive director of the Tech Justice Law Project, which has filed seven of the current lawsuits against OpenAI, says the company’s flip-flopping makes clear the need for an external regulator. “What we need is regulatory, judicial scrutiny and oversight, to make sure that the companies can’t just do what they want when they want and how they want, and then undo it when they want that,” she says.

When asked about the prevalence of AI-associated delusions, lawyers, psychiatrists and researchers all reach for the same image: an iceberg. The delusional spirals and the suicides are the tip, says Jared Moore, a computer scientist at Stanford who has studied the phenomenon. Like his fellow researchers Pollak and Nicholls, Moore believes that the same design choices that sent Jim to a psychiatric ward are at work on the hundreds of millions of people using chatbots every week. Many may find that their beliefs, decisions and relationships are being reshaped by a system that is designed, above all, to keep them engaged.

“These models are natural experiments on human psychology,” says Pollak. “And we don’t yet know how the decisions taken at the level of the people who make these models are going to ripple out.”

Meanwhile, the dials of belief are being turned further still. In the suicide of Jonathan Gavalas, it was Gemini’s new “voice mode” feature that drew him in: a chatbot that could speak and listen, and respond in real time. As these systems gain personalised voices and the ability to recall the details of a user’s life across conversations, the risk is that they will become more deeply embedded than ever. Experts warn that the impact on children, whose grip on reality is still forming, requires urgent research.

Jim has now recovered, thanks in part to the support of his family and friends. “Maybe his age and his life experience helped him to be able to do that,” says his sister, Susie. “But I would imagine that something like this happening to someone in their formative years would really define who they are.”

Samaritans can be contacted on freephone 116 123, or email jo@samaritans.org or jo@samaritans.ie. Youth suicide charity Papyrus can be contacted on 0800 068 4141 or email pat@papyrus-uk.org.

Photographs by Joel Gavalas via AP, Alamy